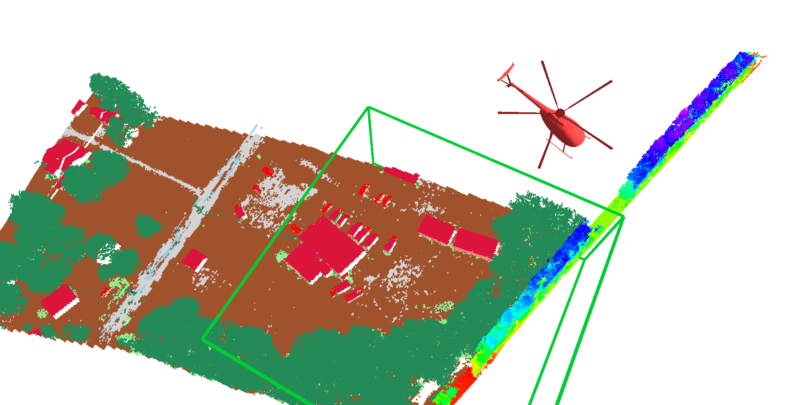

Online 3D Semantic Segmentation for Autonomous Helicopters

Advanced capabilities for autonomous aerial vehicles require an understanding of the environment that goes beyond its geometry. For example, an autonomous helicopter's planning system may need to treat buildings and foliage differently when generating flight trajectories, giving buildings more clearance, or to treat rocks and grass differently when searching for landing sites, since landing on grass is acceptable but landing on rocks is not. As a step towards this goal, we present a system for online semantic labeling of streaming LiDAR point cloud data. We demonstrate the system on aerial LiDAR data collected as part of the Autonomous Aerial Cargo Utility System (AACUS) project.